Based on a Tech Talk delivered by Technical Director Richard Brown, this blog explores how to successfully implement AI-assisted engineering.

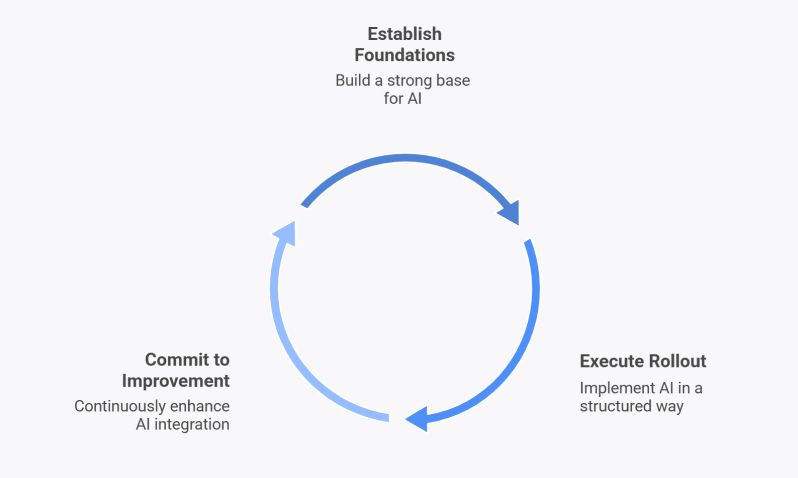

Rolling out AI to engineering teams successfully depends on three interconnected phases: establishing solid foundations, executing a structured rollout and committing to continuous improvement. Each phase builds on the previous one to create sustainable AI adoption that delivers real value. These observations come from our internal AI deployment at Audacia and supporting other organisations through similar transformations.

What are the foundations of AI-assisted engineering?

Before rolling out AI-assisted engineering, three foundations need to be in place: a clear AI governance policy that defines what people can and can't do, solid delivery practices that AI can amplify rather than expose, and documented coding standards that can be fed directly into AI tools as context.

AI Governance

Research into AI adoption across organisations reveals that providing guardrails and governance strongly correlates with successful AI adoption. On the other hand, adoption rates drop when organisations impose no constraints. Without defined boundaries, people become nervous and uncertain about what they're allowed to do.

A key first step to laying the foundations for AI is publishing an AI policy, which removes this ambiguity. The policy should clarify acceptable use – which tools are approved, licensing requirements, privacy controls and IP indemnity policies – as well as defining overarching principles that must be followed when using AI tools. The principles we use at Audacia are:

Accountability: People remain responsible for the quality and accuracy of their work, including any AI-generated output used.

Fairness: AI models can reflect biases in their training data, so output should be reviewed carefully wherever it influences decisions that affect people.

Maintainability: AI-generated code should meet the same standards for readability and structure as anything written by a human.

Privacy: AI tools must handle data appropriately, with clear retention policies and care taken over what is shared with external models.

Security: AI tools warrant particular scrutiny – tools must meet appropriate security standards, and applications built with AI capabilities need proper security testing.

Transparency: AI involvement should be clear to all stakeholders and consumers, whether in code, content or decisions.

Solid Delivery Practices

The annual DORA (DevOps Research and Assessment) report recently focused on AI-assisted engineering and its impact across the software industry. The key takeaway is that AI acts as an amplifier: robust, mature practices accelerate the positive impact of AI, while poor processes block successful adoption.

In practice this means that if work flows smoothly through the pipeline, code review and pair programming processes are robust, testing is comprehensive and deployments are quick, AI will generally make those things even better. However, if single points of failure exist and bottlenecks plague your processes, then AI will amplify those problems too. Making sure solid foundations exist before introducing AI ensures that the right practices get amplified.

Many of these solid delivery foundations represent established good practice. For example, small batch sizes matter – committing often, submitting small pull requests, breaking user stories down into smaller chunks, and avoiding long-lived feature branches and the troublesome merge conflicts that come with them. Additionally, unit testing becomes critical when generating more code faster – a comprehensive automated test suite ensures increased velocity doesn't introduce regression bugs.

Lastly, recognising that software engineering represents just one part of the overall delivery lifecycle is crucial. Speeding up software engineers achieves nothing if bottlenecks exist elsewhere in the lifecycle – if test environments aren't fit for purpose or requirements can't be defined quickly enough, faster developers simply wait for requirements or work on the wrong things. Ultimately, the entire development lifecycle needs examination, not just isolated parts.

Documented Standards

AI-assisted engineering demands documented standards – without them, there is nothing to govern the code AI generates. If your organisation doesn't have established coding standards, this is a good opportunity to document them.

Coding standards need to be communicated effectively to AI tools. Having Copilot or Claude generate extensive code that engineers must immediately rewrite or refactor to fit coding standards is inefficient. To address this, your organisational standards should be part of the context that large language models use to generate content. Technologies like MCP servers allow coding standards to be pulled into the LLM context window, helping the model understand what 'good' looks like.
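As a rough illustration of the idea, the sketch below gathers standards documents from a folder and places them ahead of a task description. It does not use MCP itself; it only shows the underlying principle of putting standards into the model's context. The folder layout and the function names `load_standards` and `build_prompt` are assumptions made for this example.

```python
from pathlib import Path


def load_standards(directory: Path) -> str:
    """Concatenate every Markdown file in the folder into one block of text."""
    sections = []
    # Sort the files so the resulting context is stable between runs.
    for path in sorted(directory.glob("*.md")):
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {path.stem}\n{body}")
    return "\n\n".join(sections)


def build_prompt(task: str, standards: str) -> str:
    """Place the standards ahead of the task so the model reads them first."""
    return (
        "Follow these organisational coding standards when generating code.\n\n"
        f"{standards}\n\n"
        f"Task: {task}"
    )
```

The returned string would then be passed to whichever AI tool the team uses. An MCP server can serve the same standards on demand, so they don't have to be pasted into every prompt.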

Ideally, these foundational pieces should be in place before rollout begins. That's not always feasible, so laying the foundations and rolling out tools can happen in parallel. At a minimum, though, before rollout the overall software development lifecycle needs examination, and people need to know what they are and aren't allowed to do with AI.

How do you roll out AI tools across an engineering team?

Rolling out AI tools requires choosing the right tool for each team's environment, with data security and longevity in mind, and establishing benchmarks to evaluate new tools consistently. Equally important is role-specific training that sets realistic expectations – using AI effectively is a skill that doesn't automatically come with technical ability.

Choosing the Right Tools

The obvious first step to rolling out AI is giving people access to the right AI tools, such as GitHub Copilot or Claude Code. Choosing the right tools for the right team requires meeting engineers where they spend most of their time. For example, if developers work primarily in VS Code, Copilot often makes sense because of its deep integration. Data security should also inform this choice – tools must have appropriate terms of service, clear data retention policies and transparent privacy policies.

Beyond the initial selection, tool choice needs to account for longevity. The AI landscape moves quickly, and the right tool today might not be the right tool in six months. This makes ongoing assessment a responsibility in itself – staying aware of new models and services as they emerge rather than treating the initial rollout as a settled decision.

To help with ongoing tool assessment, organisations can establish benchmarks to test against. Measuring tools against dimensions like correctness (does it meet the requirement given?), autonomy (how much rework is required?), quality (does it generate good code?) and even token usage (how efficiently does it get to a solution?) gives teams a reliable basis for comparison. A representative codebase or application – something that genuinely reflects the organisation's work – makes this practical. For example, when a new model or plugin ships, it can be tested against something real rather than abstract criteria.
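One way to make such a benchmark comparable across tools is to record each dimension as a number and combine them into a weighted score. The sketch below is illustrative only: the 0-1 scales, the weights, the token budget and the names `BenchmarkRun`, `score` and `rank` are all assumptions, not a prescribed scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkRun:
    """One tool's result on the representative codebase (all fields hypothetical)."""
    tool: str
    correctness: float  # 0-1: share of the stated requirements met
    autonomy: float     # 0-1: 1.0 means no human rework was needed
    quality: float      # 0-1: reviewer's rating of the generated code
    tokens_used: int    # total tokens consumed to reach a solution


# Assumed weights for illustration; they sum to 1.0 and should be tuned per organisation.
WEIGHTS = {"correctness": 0.4, "autonomy": 0.25, "quality": 0.25, "efficiency": 0.1}


def score(run: BenchmarkRun, token_budget: int = 100_000) -> float:
    """Combine the four dimensions into a single weighted score."""
    # Efficiency falls linearly as token usage approaches the (assumed) budget.
    efficiency = max(0.0, 1.0 - run.tokens_used / token_budget)
    return (
        WEIGHTS["correctness"] * run.correctness
        + WEIGHTS["autonomy"] * run.autonomy
        + WEIGHTS["quality"] * run.quality
        + WEIGHTS["efficiency"] * efficiency
    )


def rank(runs: list[BenchmarkRun]) -> list[BenchmarkRun]:
    """Order runs from highest to lowest score."""
    return sorted(runs, key=score, reverse=True)


# Made-up example figures, purely to show the comparison.
runs = [
    BenchmarkRun("tool-a", correctness=0.9, autonomy=0.8, quality=0.7, tokens_used=50_000),
    BenchmarkRun("tool-b", correctness=0.7, autonomy=0.9, quality=0.9, tokens_used=20_000),
]
for run in rank(runs):
    print(f"{run.tool}: {score(run):.3f}")
```

Re-running the same tasks whenever a new model or plugin ships gives a like-for-like comparison, provided the weights and the representative codebase stay fixed between runs.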

Training and Upskilling

Alongside providing access to tools, ensure guidance is provided on how best to use them. Whether called prompt engineering, vibe coding, or something else, using AI as a software engineer is a skill in itself – and the best software engineers aren't necessarily the best at using AI, and vice versa.

Any training should present a balanced attitude to AI. Opinions tend to be polarised – hype at one end, dismissal at the other – with the truth somewhere in the middle. AI has limitations, and sometimes stepping outside the AI loop entirely is needed. Without these realistic expectations, the first roadblock an engineer faces can lead to disillusionment and disengagement.

Making training role-specific matters too. General organisational training is useful, but software engineers, test engineers and other teams each need specific guidance. Like tooling, meeting people where they are is essential. Finally, training must evolve. As new employees join and tools update, the ability to deliver ongoing, up-to-date guidance is what turns a one-off rollout into lasting capability.

How do you sustain and improve AI adoption over time?

Sustaining AI adoption long-term comes down to culture, clarity and measurement. Building communities that encourage open knowledge sharing, defining where AI works well and where human judgement is still needed, and tracking delivery velocity and quality all ensure AI continues to add value rather than becoming shelfware or a crutch.

Building Communities

Embedding AI adoption into an organisation’s culture by building passionate communities is what will drive AI forward and continuously improve how it gets used. As part of this, AI adoption shouldn't be seen as a top-down mandate – instead, everyone should have a voice. Newer engineers in particular are entering the industry as AI natives. This technology moves so fast and is so new that everyone has valid opinions worth hearing.

These communities should also promote knowledge sharing. Whether through existing communities of practice, online forums, or Slack and Teams channels, building communities around AI creates space for sharing success stories, discussing challenges and asking questions. To avoid knowledge silos forming, foster a "no stupid questions" culture where everyone learns from each other. Otherwise, progress stays localised instead of moving the whole organisation forward.

Developing Use Cases

Developing specific use cases helps teams understand the boundaries of AI. There are some cases AI struggles with, and some it handles incredibly well. Repetitive, somewhat tedious tasks can generally be offloaded to AI agents effectively. For example, AI has proven reliable for upgrading codebases from one framework version to another, assuming the upgrade path is well-documented.

However, understanding boundaries remains important. Where does manual intervention still matter? When developing use cases involving AI agents, how much freedom and control should those agents have? Where does a human need to remain in the loop? Defining these boundaries ties back to acceptable use policies.

Tracking Progress

Tracking key metrics is important for ensuring that AI is being used effectively, and that individuals aren’t relying on it in place of critical thinking. Maintaining the ability to solve novel problems without AI is crucial. Key metrics to track include delivery velocity and quality – as AI adoption increases, the amount of value delivered should increase too, and quality should, at minimum, remain constant.
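A simple way to check this is to compare a period before adoption with a period after it. The sketch below is a minimal example under stated assumptions: velocity is measured as work items delivered per week, quality as escaped defects per delivered item, and quality counts as "held" if the defect rate rises by no more than a small tolerance. The names `PeriodMetrics` and `assess`, the 5% tolerance and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    """Delivery figures for one measurement period (hypothetical fields)."""
    items_delivered: int
    escaped_defects: int
    weeks: int

    @property
    def velocity(self) -> float:
        """Work items delivered per week."""
        return self.items_delivered / self.weeks

    @property
    def defect_rate(self) -> float:
        """Escaped defects per delivered item; 0.0 when nothing was delivered."""
        if self.items_delivered == 0:
            return 0.0
        return self.escaped_defects / self.items_delivered


def assess(baseline: PeriodMetrics, current: PeriodMetrics, tolerance: float = 0.05) -> dict[str, bool]:
    """Report whether velocity rose and whether quality stayed within tolerance."""
    return {
        "velocity_improved": current.velocity > baseline.velocity,
        # Quality "holds" if the defect rate has not risen by more than the tolerance.
        "quality_held": current.defect_rate <= baseline.defect_rate * (1 + tolerance),
    }


# Made-up figures for two eight-week periods.
before = PeriodMetrics(items_delivered=40, escaped_defects=4, weeks=8)
after = PeriodMetrics(items_delivered=56, escaped_defects=5, weeks=8)
print(assess(before, after))
```

Numbers like these only show the delivery side. They say nothing about over-reliance on AI, which still needs human judgement to spot.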

Summary

Successful AI adoption in engineering requires more than tool access. It starts with clear policies, strong delivery practices and documented standards, moves into carefully chosen tools and realistic training, and succeeds long-term through knowledge-sharing communities, well-defined use cases and consistent progress tracking.

Above all, AI-assisted engineering is not a one-off endeavour. Treating adoption as a continuous process – revisiting foundations, refreshing training and reassessing tooling as the landscape shifts – is what separates organisations that sustain value from those where initial momentum fades.